Testing and QA of AI-Native Solutions

Specialized quality assurance for systems that don’t behave like traditional software

As Finland’s leading software quality company, we help build trust in AI-native systems: systems where behavior isn’t fully predictable, outputs can’t always be directly verified, and quality isn’t achieved by testing alone.

Whether you need a single specialist for a specific challenge or end-to-end QA coverage, we can help.

Why?

In traditional software testing, the same input is expected to produce the same output. In AI-native systems, that’s not always the case: a model may produce different responses in different contexts, behave unexpectedly in edge cases, or degrade silently over time as its environment changes.

This fundamentally changes what quality assurance means. It’s no longer just about confirming correctness; it’s about building trust in a system whose behavior can’t be fully explained. Without clear, measurable evaluation criteria, quality gaps accumulate invisibly and only surface when a user encounters a critical failure.

A well-tested AI system is one that can be trusted – by developers, by the business, and by end users. In practice, this means: the system produces consistent and purposeful outputs, its performance remains stable in production, and the key risks – hallucinations, bias, inconsistent behavior – have been identified and managed before they cause harm.

Beyond reliability, the EU AI Act places growing requirements on documentation, risk classification, and quality assurance for AI systems. Rigorous testing is the foundation of compliance.

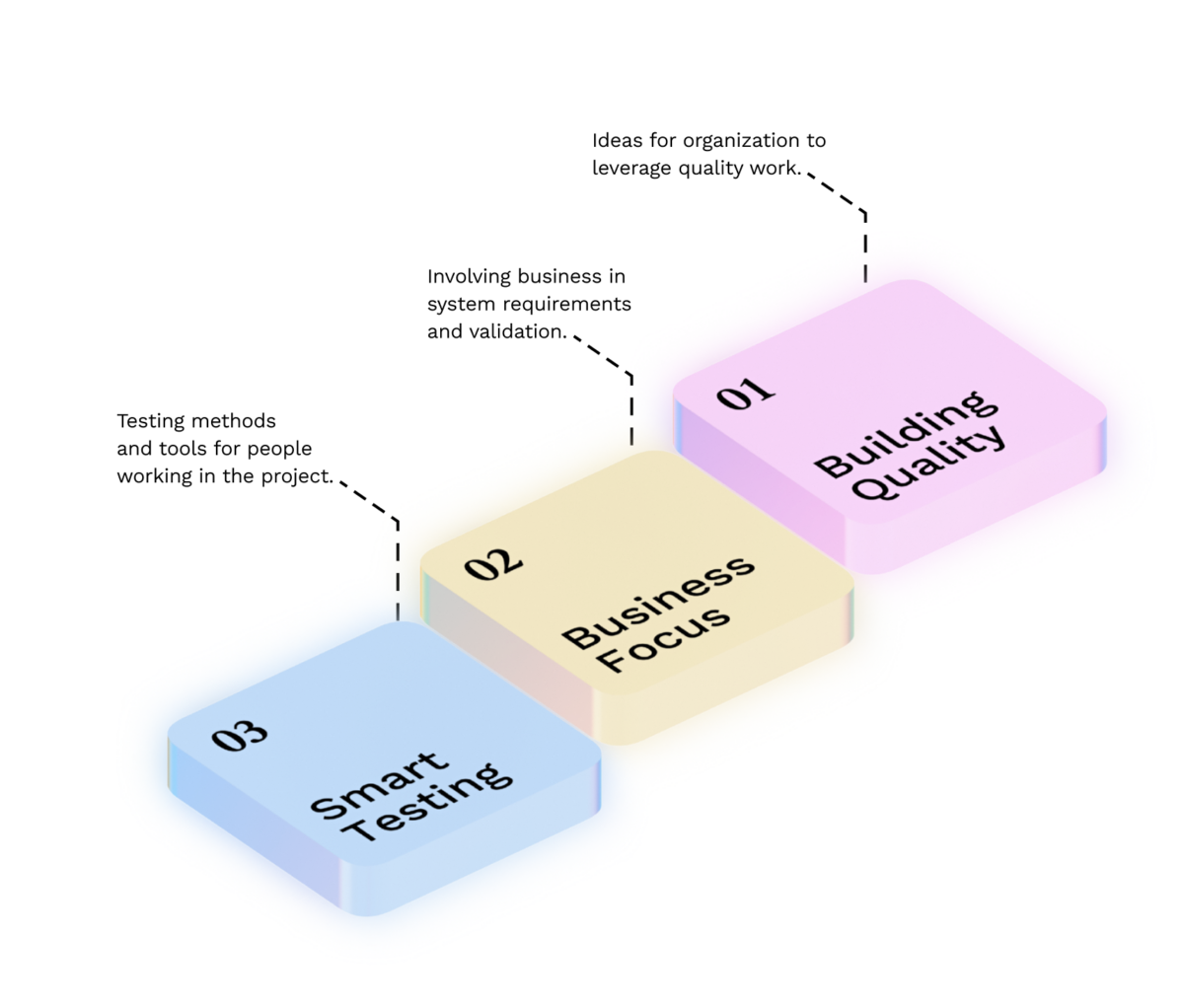

Our entire process is based on the VALA Quality Model. Quality assurance for AI-native systems extends to all levels of the model: it starts with building quality in – ensuring clear evaluation criteria and testability – covers aligning with business objectives, and concludes with practical testing and continuous monitoring. Learn more about the VALA Quality Model in detail here.

Want to know how we can help ensure the reliability of your AI system? Get in touch!

AI cannot fully validate itself

AI produces plausible outputs. Quality assurance looks for what is wrong, missing, or misunderstood. These are opposing perspectives.

Asking AI to evaluate its own output doesn’t close the quality gap; it hides the gap behind false confidence. QA for AI-native systems requires a human: someone who defines measurable quality goals, builds an evaluation framework, designs the test data, and interprets results critically.

Read our white paper to learn more about testing AI systems and using AI in testing!

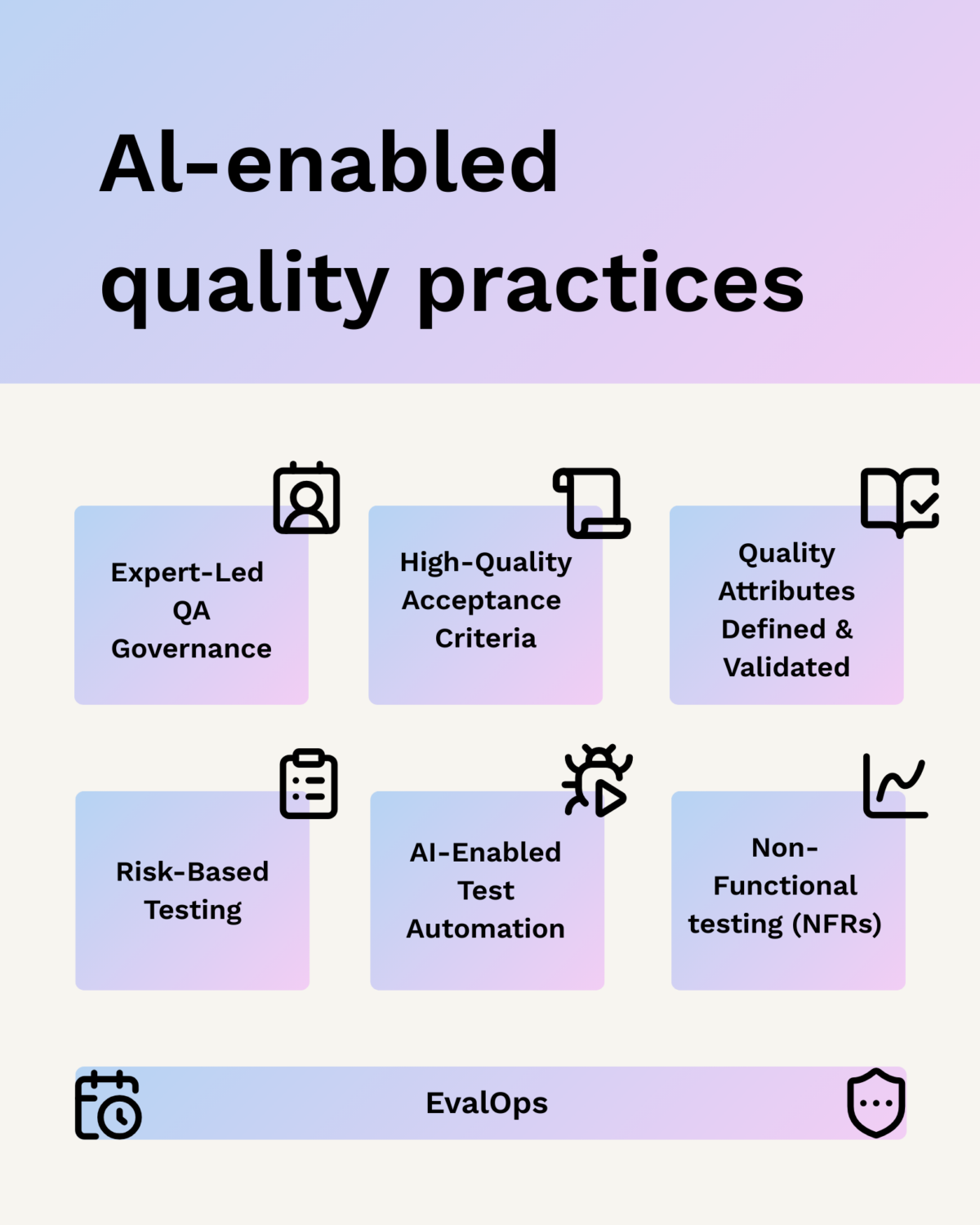

In the AI era, QA is more vital than ever: clear acceptance criteria now guide both humans and autonomous agents in code and test generation. Quality attributes must be defined early to steer AI-generated solutions effectively. Finally, EvalOps ensures continuous quality by integrating evaluation into every stage to measure and improve model behavior.

VALA’s service for testing AI-native systems

How can we help in practice?

VALA’s specialists bring the same quality assurance fundamentals to AI-native system testing as to everything else we do, complemented by a deep understanding of what makes AI systems uniquely challenging to test.

We build evaluation frameworks that make AI output quality measurable rather than subjective. We design test data strategies that cover real-world use cases, edge cases, and adversarial inputs, because generic test data isn’t enough when system behavior depends on context. We develop methods for testing non-deterministic behavior in cases where traditional expected/actual comparisons don’t suffice.

We also ensure that quality assurance doesn’t stop at launch: an AI model can degrade in production as its environment evolves, so continuous monitoring is a core part of our offering. Where needed, we support documentation and testing practices aligned with EU AI Act requirements.

Why VALA?

We are one of Finland’s largest software quality companies, and as a 2025 Great Place to Work winner, we attract and retain the best specialists in the field. That means our clients get experts who truly know what they’re doing.

Testing AI-native systems is a new specialization, but it’s built on the same foundations as all high-quality testing work: clear objectives, traceable tests, and risk-based prioritization. Over 18 years of experience have taught us what matters most in those foundations, and how to apply them to a new context. Our active expert community continuously shares knowledge on AI system testing, so our clients benefit from the latest best practices in the field.

Technologies & Tools

We are technology-agnostic; we apply tools and methodologies flexibly based on the customer’s needs and ecosystem.

AI Validation: Model performance and reliability (e.g., Ragas, LangSmith, Promptfoo).

Test Automation: Modern and established solutions, such as Playwright, Robot Framework, and Python-based tools.

Continuous Monitoring: Ensuring operational integrity after release (e.g., Dynatrace and customized monitoring).

Test Management: A seamless part of the team’s daily workflow (Azure DevOps, Jira, Xray).

Want to know more about AI’s impact on software testing?

Areas of AI-native system quality assurance

LLM and Generative AI Evaluation

Evaluating large language models requires specialized frameworks. Rather than checking for a single correct answer, we assess consistency, relevance, and safety, often across multiple runs and input variations.

RAG System Testing

In Retrieval-Augmented Generation systems, search and model work together. Testing covers both retrieval quality – does the right information surface at the right time – and the model’s ability to use retrieved content accurately, without hallucination or context misinterpretation.
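
As a minimal sketch of how the retrieval side can be made measurable, the Python below computes recall@k: the share of documents known to be relevant that appear among the top k retrieved results. The function names, the document IDs, and the idea of a hand-labelled set of relevant documents per query are illustrative assumptions, not a description of any specific tool.

```python
from typing import Iterable, Sequence, Set, Tuple


def recall_at_k(retrieved: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the relevant document IDs that appear in the top-k results."""
    if not relevant:
        raise ValueError("relevant set must not be empty")
    top_k = set(retrieved[:k])
    return len(top_k & relevant) / len(relevant)


def mean_recall_at_k(cases: Iterable[Tuple[Sequence[str], Set[str]]], k: int) -> float:
    """Average recall@k over (retrieved, relevant) pairs, one pair per test query."""
    scores = [recall_at_k(retrieved, relevant, k) for retrieved, relevant in cases]
    if not scores:
        raise ValueError("at least one test case is required")
    return sum(scores) / len(scores)


# Hypothetical test set: retrieved IDs in ranked order, plus labelled relevant IDs.
cases = [
    (["d1", "d7", "d3"], {"d1", "d3"}),
    (["d2"], {"d2"}),
]
print(mean_recall_at_k(cases, k=2))
```

A score like this only covers retrieval; whether the model then uses the retrieved content faithfully has to be evaluated separately.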

AI Integration Testing

When an AI component is part of a larger system, integration testing ensures the parts work together as expected, even when the AI’s output is variable in nature.

Non-Deterministic Behavior Testing

Traditional expected/actual comparisons don’t suffice when the same input may produce different outputs on different runs. We develop methods to assess output quality statistically and distinguish acceptable variation from harmful inconsistency.
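
One way to assess output quality statistically, sketched here under stated assumptions, is to run the same prompt many times, check each output against an acceptance criterion, and require that a conservative estimate of the pass rate clears a threshold. The sketch uses the Wilson score interval's lower bound for that estimate; `generate`, `check`, the number of runs, and the 0.9 threshold are hypothetical placeholders rather than recommended values.

```python
import math
from typing import Callable


def wilson_lower_bound(passes: int, runs: int, z: float = 1.96) -> float:
    """Lower bound of the Wilson score interval for a pass rate (z=1.96 ~ 95%)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = passes / runs
    denom = 1 + z * z / runs
    centre = p + z * z / (2 * runs)
    margin = z * math.sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs))
    return (centre - margin) / denom


def passes_statistically(
    generate: Callable[[str], str],   # assumption: wraps the system under test
    check: Callable[[str], bool],     # assumption: encodes one acceptance criterion
    prompt: str,
    runs: int = 50,
    min_rate: float = 0.9,
) -> bool:
    """True if the conservative pass-rate estimate is at least min_rate."""
    passes = sum(1 for _ in range(runs) if check(generate(prompt)))
    return wilson_lower_bound(passes, runs) >= min_rate
```

Using the lower bound rather than the raw pass rate means that a small number of runs cannot produce a confident pass by luck: 45 passes out of 50 gives a raw rate of 0.9 but a lower bound well below it.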

Bias and Fairness Testing

We verify that the system performs equitably across different user groups, contexts, and input types, and that potential biases are identified before reaching production.
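
A simple starting point for such a check, shown as a sketch, is to compare the pass rate of the same evaluation across groups and flag a large gap. The group labels, the result format, and the choice of "largest gap between any two groups" as the metric are illustrative assumptions; a real fairness assessment would choose metrics to fit the use case.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def pass_rate_by_group(results: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Pass rate per group from (group_label, passed) evaluation results."""
    totals: Dict[str, int] = defaultdict(int)
    passes: Dict[str, int] = defaultdict(int)
    for group, passed in results:
        totals[group] += 1
        if passed:
            passes[group] += 1
    return {group: passes[group] / totals[group] for group in totals}


def max_gap(rates: Dict[str, float]) -> float:
    """Largest difference in pass rate between any two groups."""
    if not rates:
        raise ValueError("no groups to compare")
    return max(rates.values()) - min(rates.values())


# Hypothetical results: the same test suite run on inputs in two languages.
results = [("fi", True), ("fi", True), ("sv", True), ("sv", False)]
rates = pass_rate_by_group(results)
print(rates, max_gap(rates))
```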

Continuous Monitoring and Drift Detection

An AI model can degrade over time as its environment or underlying data changes. Continuous monitoring and automated alerts ensure quality remains under control well beyond launch day.
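
As one illustration of drift detection, the sketch below computes the Population Stability Index (PSI) between a baseline sample and a current sample of some numeric signal, such as an evaluation score or an input feature. The binning scheme, the `eps` floor for empty bins, and the choice of signal are assumptions of this sketch; PSI above roughly 0.2 is often treated as a rule-of-thumb sign of significant shift, but alert thresholds should be tuned per system.

```python
import math
from typing import List, Sequence


def psi(baseline: Sequence[float], current: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index of `current` relative to `baseline`."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def histogram(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the baseline range
            counts[idx] += 1
        # Floor each proportion at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    b, c = histogram(baseline), histogram(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


# Hypothetical samples: scores spread evenly at launch, concentrated later.
baseline = [i / 100 for i in range(100)]
current = [0.9 + i / 1000 for i in range(100)]
print(psi(baseline, current))
```

In a monitoring setup, a value like this would be computed on a schedule and compared against the chosen threshold to trigger an alert.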

Ask for more!