Research: how much can AI increase efficiency in QA?

21.04.2026

We asked 23 VALA people a simple question:

How much has your working efficiency improved because of AI assisted tools?

We also asked people to estimate the improvement for one year and five years from now.

This is not a large study but rather a small internal snapshot from people who work in QA and test automation every day. Still, it is useful because it shows how QA specialists actually feel about AI in their real work.

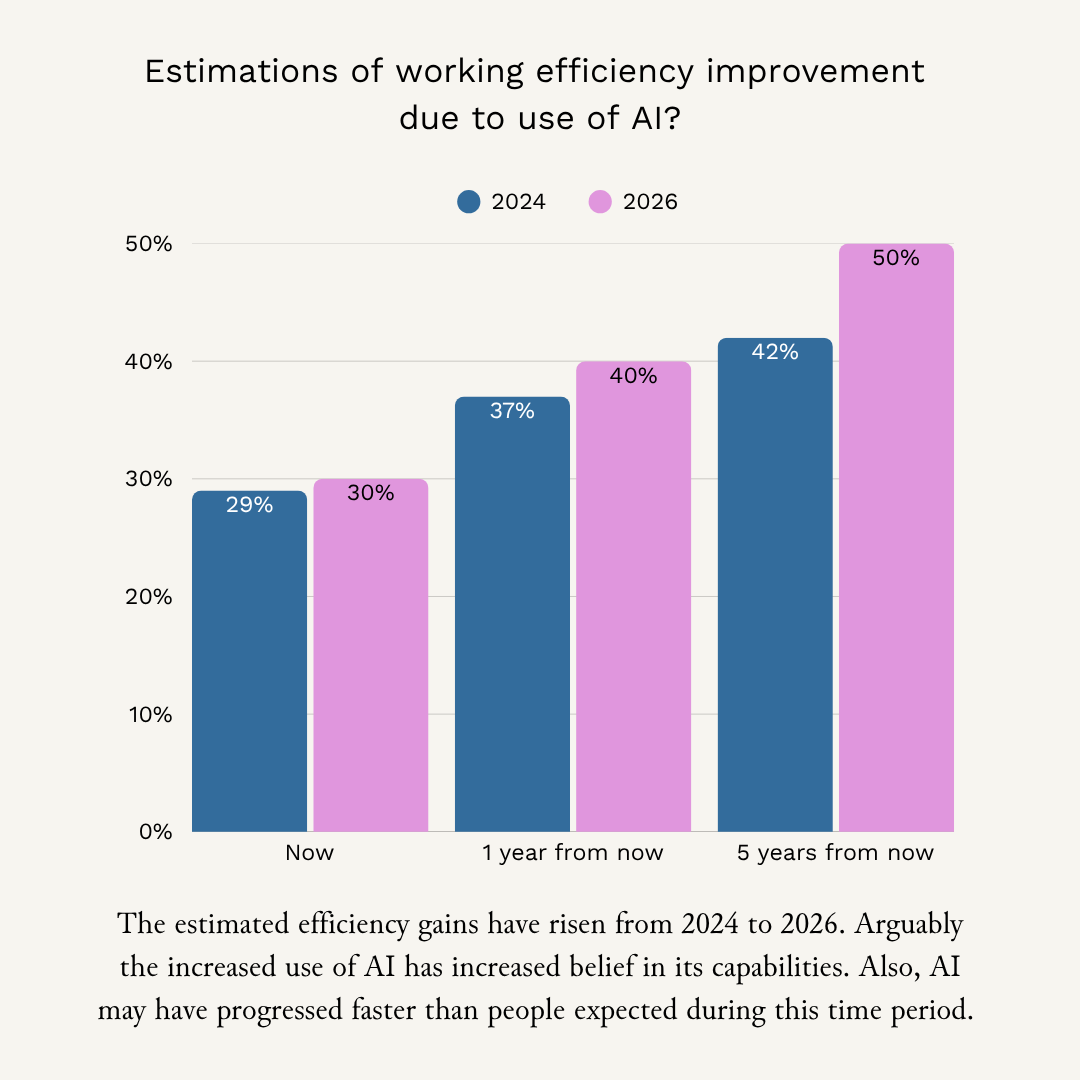

The simple top-line result

- Today: the median answer was 30% improved efficiency

- In one year: the median estimate was 40% improved efficiency

- In five years: the median estimate was 50% improved efficiency

There is a wide spread: some people reported only 10% today, others 60–70%. Also, in some organizations the use of AI tools is very limited or even prohibited, and VALA people working there probably did not answer the survey, so the results likely lean toward people who already use AI.

We asked the same question in May 2024, and the image below compares the two surveys. The estimated efficiency gains clearly rose from 2024 to 2026. Wider use of AI may have increased trust in its capabilities, and AI may also have progressed faster than people expected during this period.

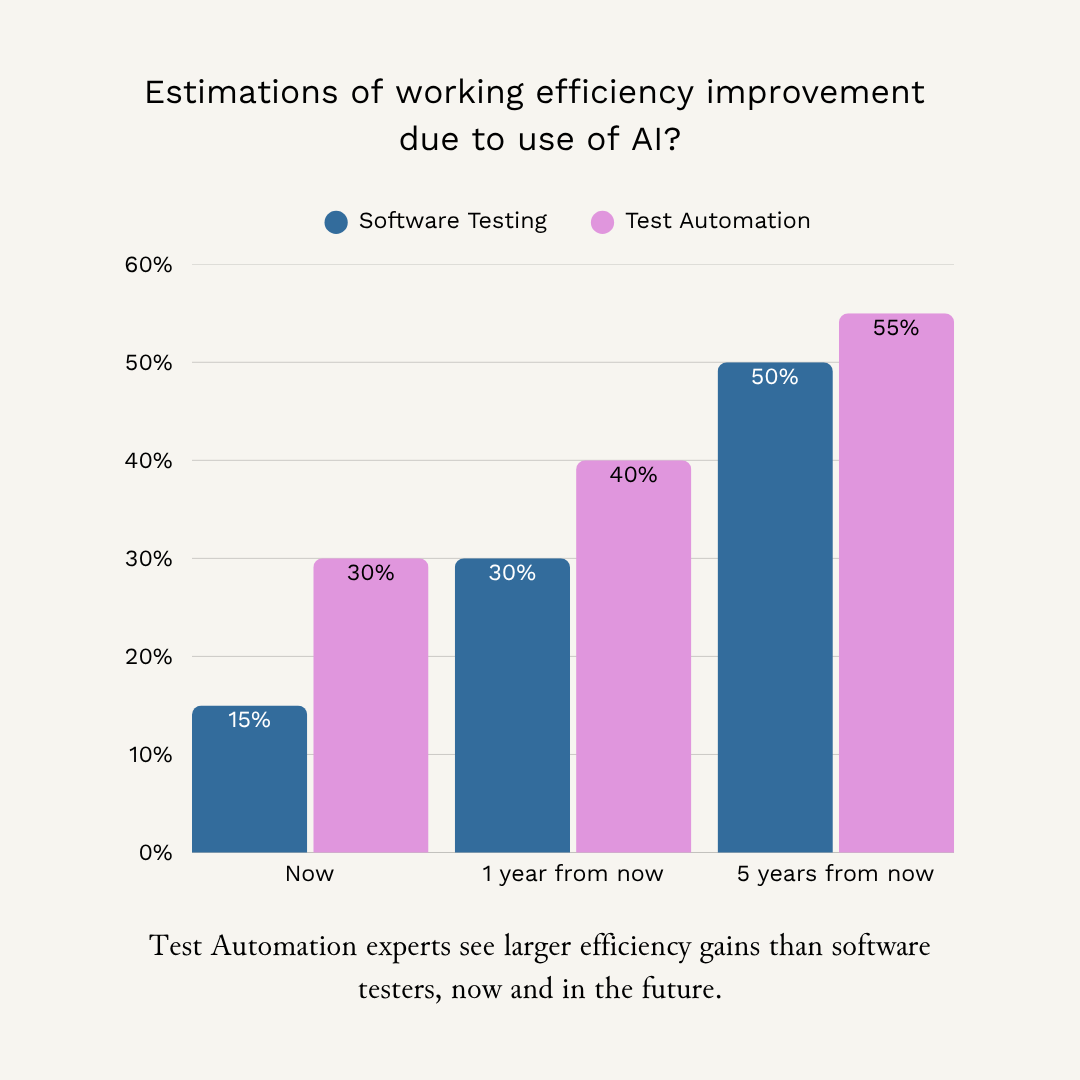

What changes when you look at roles

Most respondents work in test automation (15 out of 23). The rest were software testers (5) and test managers (3).

When we split answers by role, a clear pattern shows up:

Test Automation

- Median improvement today: 30%

- Median estimate in one year: 40%

- Median estimate in five years (numeric answers): 55%

Software Testing

- Median improvement today (numeric answers): 15%

- Median estimate in one year (numeric answers): 30%

- Median estimate in five years (numeric answers): 50%

- Note: this group had more “free text” answers and missing answers, so treat these numbers as rough.

Test Management

- The sample is too small to say much. Results were mixed.
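The medians marked “numeric answers” leave out answers that were not a plain number. A minimal sketch of that kind of filtering is below. The function names and the rule for what counts as numeric are our own illustrative assumptions, not the exact method used for this survey; in particular, this version simply drops ranges such as “60–70%” instead of, say, taking a midpoint.

```python
import re
from statistics import median
from typing import List, Optional

# Assumed rule: an answer is numeric only if it is a single number,
# optionally followed by a percent sign (e.g. "30" or "30 %").
_NUMERIC = re.compile(r"\s*(\d+(?:\.\d+)?)\s*%?\s*")


def parse_percentage(answer: str) -> Optional[float]:
    """Return the answer as a float, or None for free text, blanks and ranges."""
    match = _NUMERIC.fullmatch(answer)
    return float(match.group(1)) if match else None


def numeric_median(answers: List[str]) -> Optional[float]:
    """Median over the answers that parse as a single number (None if none do)."""
    values = [v for v in (parse_percentage(a) for a in answers) if v is not None]
    return median(values) if values else None


# Illustrative answers only, not the real survey data.
answers = ["10%", "20", "hard to say", "", "60–70%", "30 %"]
print(numeric_median(answers))
```

With only a handful of numeric answers left in a group, one or two people can move the median a lot, which is why those numbers should be treated as rough.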

What we take from this:

- People closest to code and automation see the biggest gains first.

This makes sense. A lot of AI value is in “text and code work”: writing scripts, generating test ideas, summarising logs, producing checks, cleaning up code, and drafting documentation.

- Software testing roles still expect big gains, but they start lower.

Many “software testing” tasks include judgment, context, and communication. AI can support them, but it does not remove the need for a human to decide what matters.

- The gap is a leadership problem, not a people problem.

If one group gets 50% benefit and another gets 10%, the right question is:

What use cases, training, and ways of working are we missing for the second group?

How does this compare to external research?

Our numbers are self-reported, so we should treat them with care. People can feel faster without being faster.

External research shows that AI impact can be large, but it depends on the task and the person:

- McKinsey estimates that the direct impact of AI on software engineering productivity could be 20–45%, mostly by reducing time spent on specific activities like drafting code, refactoring, and root cause analysis.

- A controlled experiment on GitHub Copilot found the group with Copilot completed a coding task 55.8% faster than the control group.

- A large field study in customer support showed AI assistance increased productivity by about 14–15%, with much larger gains for less experienced workers.

But there is also a warning sign:

- A randomized controlled trial by METR on experienced open source developers found that with early 2025 AI tools, developers were 19% slower, even though they believed they were faster. It is worth noting, though, that this trial is now over a year old, which is an eternity at the current pace of AI development.

So the honest conclusion is:

AI can create big local speed-ups. But it can also add hidden work (prompting, waiting, reviewing, fixing).

That is why you need measurement in your own context.
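One detail matters when comparing these figures: “X% more efficient” and “X% faster” are not the same number. If we read an efficiency gain g as g more output per unit of time (our assumption; the survey question did not define it), then the time per task T and the fraction of time saved s are related as follows:

```latex
T_{\text{new}} = \frac{T_{\text{old}}}{1+g}
\quad\Longrightarrow\quad
s = 1 - \frac{T_{\text{new}}}{T_{\text{old}}} = \frac{g}{1+g},
\qquad
g = \frac{s}{1-s}
```

So a 30% efficiency gain (g = 0.30) means roughly 23% less time per task. Reading the Copilot result as 55.8% less time (s = 0.558) gives roughly 126% more output per hour. In the other direction, reading “19% slower” as 19% more time per task corresponds to about a 16% efficiency loss, since 1/1.19 ≈ 0.84.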

What this means for QA leaders

If AI makes development faster, it can also make QA harder.

More AI-assisted coding often means:

- more changes

- larger changes

- less shared understanding of “why this change exists”

- more variation in code and patterns

- more risk that “it works on my machine” becomes “it worked in my prompt”

This is also why we see growing compliance and evidence needs in QA work. When the system changes faster, you need better proof of what changed and why it is safe. As VALA’s AI vision states: AI amplifies existing quality. It does not create quality where none exists.

So the best AI plan for QA is not “buy tools”. It is:

- Pick 2–3 clear use cases per role

For test automation, for example: create checks faster, tackle flakiness, and summarise failures.

For software testing: generate test ideas, review requirements for testability, and help write structured bug reports.

- Measure with a baseline

Track time spent, defect escape rate, flaky test rate, or lead time to feedback.

Without a baseline, you will not know whether you improved or just felt better.

- Fix the foundations that AI needs

AI works best when you have:

- clear requirements and acceptance criteria

- accessible test assets

- stable CI pipelines

- reliable test data

- good logging and monitoring

If these are missing, AI will not save you. It will just show your gaps faster.
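To make “measure with a baseline” concrete, here is a minimal Python sketch that computes three of the metrics named above for a baseline period and a later period and reports the relative change. The class name, field names and metric definitions (for example, counting a run as flaky when it fails and then passes on retry without a code change) are our assumptions; adapt them to what your own tooling actually records.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List, Tuple


@dataclass
class PeriodMetrics:
    """Hypothetical counts for one measurement period, e.g. one sprint."""
    defects_before_release: int    # defects caught by testing before release
    defects_in_production: int     # defects that escaped to production
    test_runs: int                 # total automated test runs in the period
    flaky_runs: int                # runs that failed, then passed on retry with no code change
    feedback_minutes: List[float]  # commit-to-first-test-result times


def defect_escape_rate(m: PeriodMetrics) -> float:
    total = m.defects_before_release + m.defects_in_production
    return m.defects_in_production / total if total else 0.0


def flaky_test_rate(m: PeriodMetrics) -> float:
    return m.flaky_runs / m.test_runs if m.test_runs else 0.0


def lead_time_to_feedback(m: PeriodMetrics) -> float:
    return median(m.feedback_minutes)


def compare(baseline: PeriodMetrics,
            current: PeriodMetrics) -> Dict[str, Tuple[float, float, float]]:
    """Return (baseline, current, relative change) per metric; lower is better for all three."""
    result = {}
    for name, metric in [
        ("defect_escape_rate", defect_escape_rate),
        ("flaky_test_rate", flaky_test_rate),
        ("lead_time_to_feedback_min", lead_time_to_feedback),
    ]:
        before, after = metric(baseline), metric(current)
        change = (after - before) / before if before else float("nan")
        result[name] = (before, after, change)
    return result


# Illustrative numbers only: a period before AI tooling vs. a period after.
baseline = PeriodMetrics(40, 10, 1000, 50, [30, 40, 50])
current = PeriodMetrics(45, 5, 1200, 36, [20, 25, 60])
for name, (before, after, change) in compare(baseline, current).items():
    print(f"{name}: {before:.3g} -> {after:.3g} ({change:+.0%})")
```

The important part is not the code but the habit: record the same metrics, defined the same way, before and after you change how you work.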

What this means for QA specialists

The core QA job does not disappear. It moves.

If AI increases output, QA becomes more about:

- setting quality criteria early (clear specs, clear “done”)

- building checks that scale

- validating that the system behaves safely and reliably

- keeping evidence and traceability

- monitoring and learning from real usage

And one more thing: do not trust your own feeling about speed.

Try to measure. The METR result is a good reminder that smart people can be wrong about their own productivity.
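A simple way to follow that advice is to time comparable tasks with and without AI and compare the result with how much faster people felt. The sketch below assumes the tasks are comparable (ideally randomly assigned to “with AI” or “without AI”, as in the METR trial) and uses median task time; the function names and numbers are hypothetical.

```python
from statistics import median
from typing import List


def measured_time_change(baseline_minutes: List[float], ai_minutes: List[float]) -> float:
    """Relative change in median task time with AI (negative = faster, positive = slower)."""
    before = median(baseline_minutes)
    after = median(ai_minutes)
    return (after - before) / before


def perception_gap(perceived_time_saved: float,
                   baseline_minutes: List[float],
                   ai_minutes: List[float]) -> float:
    """How much the self-reported time saving exceeds the measured one.

    perceived_time_saved is a fraction, e.g. 0.20 for "I feel about 20% faster".
    A positive result means people felt faster than they measurably were.
    """
    measured_time_saved = -measured_time_change(baseline_minutes, ai_minutes)
    return perceived_time_saved - measured_time_saved


# Illustrative numbers only: comparable tasks, randomly assigned to each group.
without_ai = [50, 60, 70]
with_ai = [65, 70, 80]
print(f"measured change in task time: {measured_time_change(without_ai, with_ai):+.0%}")
print(f"perception gap: {perception_gap(0.20, without_ai, with_ai):+.0%}")
```

A handful of tasks will be noisy, so collect enough data points before drawing conclusions from the gap.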

Final words

Our survey suggests that QA people already feel clear efficiency gains from AI today, and they expect those gains to grow.

But the most important result is not a number. It is the gap between roles.

If you want AI to help QA at scale, you need to make it work for:

- test automation

- software testing

- test management

- and the whole delivery system around them